Loss stagnates at 1.0 during training on custom dataset #776



I ran into the same issue after training for 1000 epochs, starting from a pre-trained Glow TTS model (300,000 steps). What else can I do?


How does the model sound?


I moved this to Discussions because it does not conform to the issue template.


From what I've read, the goal is to freeze the encoder and train only the decoder on the new dataset. I have no idea how to do that. I doubt it's possible by changing config parameters alone; it might require modifying some code. If you have any ideas, let me know. I tried doing this with Tacotron2-DDC with some luck: after about 1000 epochs on the dataset, the voice got clearer, but the overall quality didn't improve at all.
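For reference, freezing an encoder in plain PyTorch usually comes down to disabling gradients on its parameters and giving the optimizer only the remaining ones. The sketch below is a minimal illustration under assumptions: `ToyTTS`, its `encoder` and `decoder` attributes, and `freeze_encoder` are hypothetical stand-ins, not the Glow TTS or Tacotron2 classes from the TTS library, whose attribute names and trainer hooks may differ.

```python
import torch
from torch import nn


class ToyTTS(nn.Module):
    """Hypothetical stand-in for a TTS model with encoder and decoder parts."""

    def __init__(self, vocab_size=40, hidden=16, n_mels=8):
        super().__init__()
        self.encoder = nn.Sequential(
            nn.Embedding(vocab_size, hidden),
            nn.Linear(hidden, hidden),
        )
        self.decoder = nn.Linear(hidden, n_mels)

    def forward(self, tokens):
        return self.decoder(self.encoder(tokens))


def freeze_encoder(model):
    """Disable gradients for the encoder; return the still-trainable parameters."""
    for param in model.encoder.parameters():
        param.requires_grad = False
    # Put the encoder in eval mode so dropout/normalization layers stay fixed.
    model.encoder.eval()
    return [p for p in model.parameters() if p.requires_grad]


torch.manual_seed(0)
model = ToyTTS()
trainable = freeze_encoder(model)
# Only the decoder parameters are handed to the optimizer.
optimizer = torch.optim.Adam(trainable, lr=1e-3)

# Snapshot the weights so the effect of one training step can be checked.
encoder_before = [p.detach().clone() for p in model.encoder.parameters()]
decoder_before = [p.detach().clone() for p in model.decoder.parameters()]

# One training step on random data (placeholder for real text/mel batches).
tokens = torch.randint(0, 40, (4, 12))
target = torch.randn(4, 12, 8)
loss = nn.functional.mse_loss(model(tokens), target)
optimizer.zero_grad()
loss.backward()
optimizer.step()

encoder_unchanged = all(
    torch.equal(a, b) for a, b in zip(encoder_before, model.encoder.parameters())
)
decoder_changed = any(
    not torch.equal(a, b) for a, b in zip(decoder_before, model.decoder.parameters())
)
```

Note that a later call to `model.train()` switches the encoder back to training mode, so a real training loop would need to call `model.encoder.eval()` again after it. Whether freezing the encoder actually helps with the stagnating loss is untested here.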


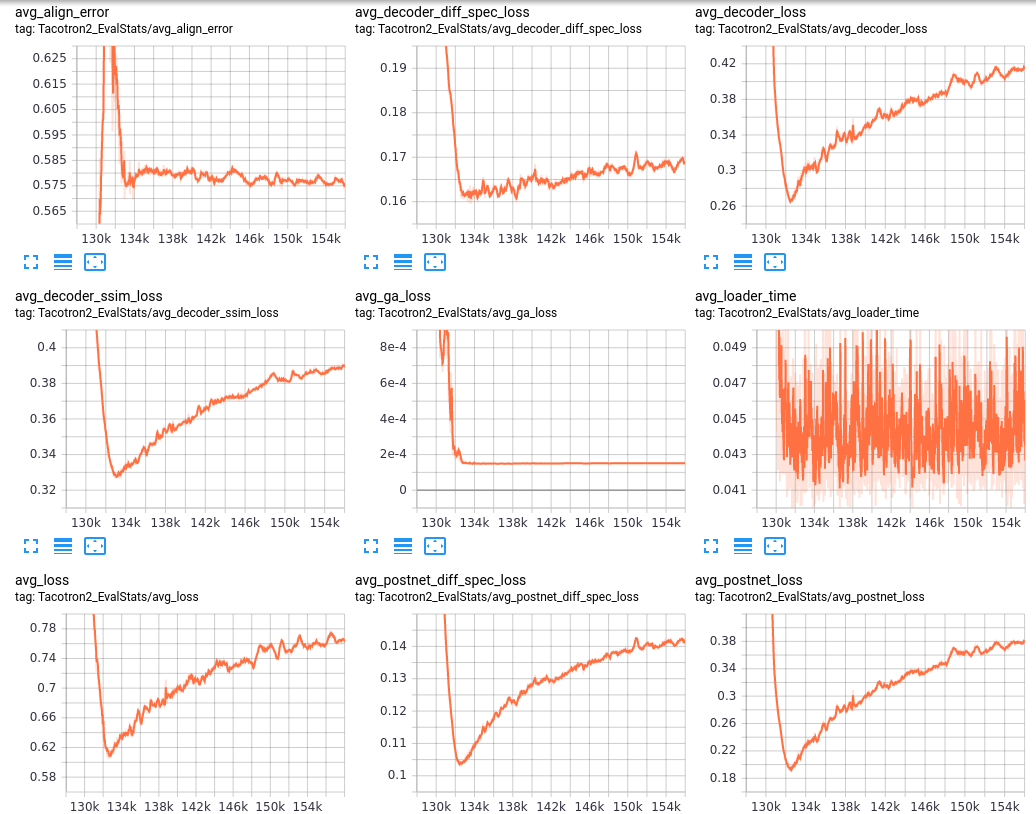

I have been fine-tuning a pre-trained Glow TTS model on Google Colab. The loss quickly dropped to 1.0 and has been increasing for the past 1400 steps or so. I made sure to remove any noise and silence from my dataset of 300 samples, and I used the analyze spectrograms notebook to check the configuration, so I am lost as to what I need to do to make the loss drop further. Do I just need to keep training?

Tensorboard results:

Configuration:

Dataset files:

https://drive.google.com/drive/folders/1OOIriahYvNPRnK3NMxzH0L1XF_cIGVuM?usp=sharing

Colab notebook:

https://colab.research.google.com/drive/1vYMd3FBpbpnFZeJlSZWCINdERAS_VUSr?usp=sharing
